First, please take this quiz on when the first computer was invented.

Computer History

How much do you know about computers? How long have they been around? How did we develop the technology?

You might be surprised to know that the ancient Greeks almost had the technology for making computers, but the knowledge was lost and not rediscovered for almost 2000 years. You might be surprised how early a working, modern computer was designed, and who wrote the programs for it. Did you know that cloth production came up with a key computer technology?

Did you know that computers changed the course of history during WWII?

A modern computer is a device which can receive information, process that information based upon the instructions of a program, and then return the processed information to the user.

This requires five basic elements of the system:

- Input: the computer must be able to receive data from the "real world" and convert that data into a form it can manipulate;

- Process: the computer must be able to change the data based upon instructions in a program;

- Programmability: the computer must be able to accept new instructions;

- Storage: the computer must be able to retain the data it is given;

- Output: the computer must be able to return the processed data to the user.
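The five elements can be seen together in a very small program. The sketch below is only an illustration: the function name `run`, the dictionary used as "memory," and the choice of `sum` and `max` as programs are all assumptions made for this example, not part of any historical machine.

```python
# Hypothetical illustration: all names here are invented for this sketch.

def run(program, raw_input):
    """Run `program` (a function) on text typed by the user."""
    # Input: convert "real world" text into numbers the machine can manipulate.
    data = [float(token) for token in raw_input.split()]
    # Storage: keep the data so that later steps can use it.
    memory = {"data": data}
    # Process: change the data according to the program's instructions.
    memory["result"] = program(memory["data"])
    # Output: return the processed data in a form the user can read.
    return f"Result: {memory['result']}"

# Programmability: the same machine accepts different instructions.
print(run(sum, "1 2 3"))  # Result: 6.0
print(run(max, "1 2 3"))  # Result: 3.0
```

Take away any one of the five parts and the device becomes something less than a computer; the abacus below, for example, has no `program` to hand in.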

Early on, devices to help with calculation and data handling had some but not all elements of this system. It was only in the past few hundred years that we began to develop what we now think of as "computers."

Before the development of the modern computer, in fact, the term "computer" referred to a person: someone who did mathematical computations. In the Manhattan Project in the 1940s, for example, "computers" were people (mostly women) who were given the job of doing the routine, "mechanical" work of calculation and checking.

However, when was the first "true" computer developed? Let's look back.

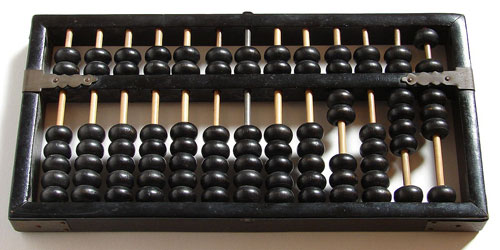

The Abacus

ca. 2500 B.C.: the Abacus could qualify as a "computer" chiefly because it is a mechanical device, and it assists in making mathematical calculations. Used for close to five millennia since ancient Mesopotamia, the abacus allows for easy addition and subtraction, and using special techniques, multiplication, division, and even square roots.

The Abacus has several of the features associated with computers: input, output, and (temporary) storage. However, processing is done by the human mind, and the device cannot be programmed. Therefore, this is not really a computer in the modern sense, but instead a limited device.

The Antikythera Mechanism

Sometime in the 1st Century B.C., about 2100 years ago, a ship sailed to Rome from Greece, or perhaps from Rhodes, an island off the coast of Turkey. The ship was laden with Greek treasure. However, the ship sank off the coast of the island Antikythera, between mainland Greece and Crete.

Then, in April 1900, nearly two millennia after the ship sank, it was found. Many historical treasures were recovered, but one was mysterious: a corroded chunk of bronze. It was not until 1951 that it was understood to be an astronomical clock, and only in the last 10 to 20 years has technology been able to reveal the inner workings.

A complex clock-like device, the Antikythera Mechanism was built as a kind of mechanical calculator. Turning a handle turns the gears within the device, which then shows the positions in the sky of the sun, the moon, and the five planets known to the Ancient Greeks. The machine also accurately predicted moon phases, and even eclipses.

The precision of the device (based at least in part upon advanced Greek mathematics) and the painstaking detail of the craftsmanship showed a level of technology that did not appear again until 1600 to 1800 years later!

Who built the Antikythera Mechanism is unclear. Archimedes was supposed to have built two such devices, but both were recorded as being in Rome after the one recovered from the shipwreck had already been lost. Another craftsman, Posidonius, was said to have built one, but the timing of that machine also did not match. There may have been several copies of the same machine at the time.

Although it is commonly called an "Ancient Computer," the Antikythera Mechanism was not a computer by the full modern definition. It could not be programmed, except in its initial construction. However, the device came close, and was an amazingly advanced marvel of engineering, something that would not be surpassed for almost two thousand years!

If you've seen the latest Indiana Jones movie, The Dial of Destiny, you might have noticed that they called the device they found "The Antikythera Mechanism." While the name and the basic idea are similar, the Antikythera shown in the movie is completely fictional.

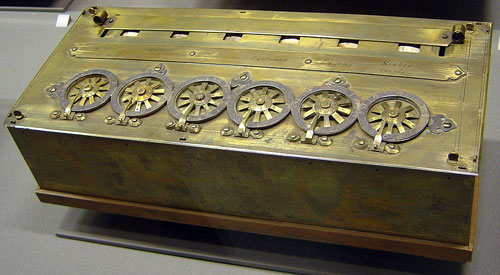

The Pascaline

In the 1640s, noted French mathematician and physicist Blaise Pascal created an adding machine which he called the Pascaline.

Less complex than the Antikythera Mechanism, the Pascaline was still notable in how it used mechanical means to perform addition and subtraction directly, and multiplication and division by repeating those operations. It formed the basis for many other calculating devices for hundreds of years afterward, and eventually helped lead to the creation of modern-day computers.

However, this device was not a computer either, as it was not programmable. The lack of programming was a big part of what kept all of these older devices outside the full modern definition of "computer."

The Jacquard Loom

Interestingly, the solution to creating computer programming came from the field of textiles—the production of cloth. Cloth was created on looms. Originally, only human workers could use looms to create fabrics with complex designs. Automated machines could not do this; they were very limited in the types of cloth they could produce.

The Jacquard Loom was created in 1801 by Joseph Marie Jacquard. It introduced the use of punch cards: cards with holes punched in them to indicate values. In Jacquard's loom, the holes indicated when a colored thread would be used in order to create a design or pattern in the cloth. Based upon earlier designs dating back to 1725, this loom changed not only the production of cloth but also the future of computer design. It was the first step toward devices that could not just calculate, but do so according to programs that one could change at any time.

Thus, despite being a loom, the machine was able to accept programs. While it was not a computer, it introduced the missing technology required to create modern computers. Punch cards remained in use with electronic computers well into the 20th century.
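To see how a deck of punch cards can act as a program, here is a much-simplified sketch. The encoding is an assumption made for illustration: each string stands for one card, `O` marks a hole, `.` marks solid card, and the function `weave` is a hypothetical stand-in for the loom. A real Jacquard mechanism is far more intricate.

```python
# Hypothetical encoding: 'O' is a hole (colored thread shows), '.' is no hole.

def weave(cards):
    """Turn each card into one woven row: '#' where there is a hole, ' ' elsewhere."""
    return ["".join("#" if mark == "O" else " " for mark in card) for card in cards]

# One deck of cards is one "program"; this deck produces a diamond.
diamond_cards = [
    "..O..",
    ".O.O.",
    "O...O",
    ".O.O.",
    "..O..",
]

for row in weave(diamond_cards):
    print(row)
```

The loom (`weave`) never changes. Swapping in a different deck produces a different pattern, which is the sense in which the cards are a program.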

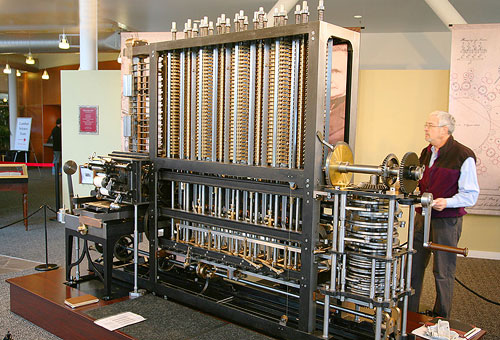

The Analytical Engine

The first device that could be thought of as a fully-featured modern computer came about from research done by Charles Babbage.

Babbage began by designing a mechanical calculator and tabulator to produce mathematical and astronomical tables, which were very difficult to produce reliably. This first design was not a full computer, as it was not programmable. Babbage called the machine the Difference Engine.

The British government, which funded the project, gave up when the project became too expensive, and Babbage never built the full machine. A complete machine was finally built from his designs in 2002, 160 years after Babbage abandoned the project.

His next project, however, was quite different: the Analytical Engine. By 1834, Babbage had proposed this more ambitious design: a machine that could perform complex mathematical calculations based upon programs entered by punch cards. Babbage completed a final design by 1849, and worked on the engine until his death in 1871.

Joining Babbage was the world's first computer programmer, Ada Lovelace. She was the daughter of the famous poet Lord Byron; her mother was a mathematician, so Ada learned the subject as well. She met Babbage in 1833, and went on to produce the mathematical work needed to create software for the Analytical Engine design.

Although the Analytical Engine was never fully built, Lovelace nevertheless successfully worked out the idea of algorithms and wrote the first computer program, one which was designed to calculate Bernoulli numbers, an important set of mathematical values.
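For a sense of what calculating Bernoulli numbers involves, here is a short modern sketch. It is not a reconstruction of Lovelace's program: it uses a standard textbook recurrence, B_0 = 1 and B_m = -1/(m+1) × Σ C(m+1, k) × B_k for k from 0 to m-1, and the function name `bernoulli_numbers` is simply a label chosen for this example. Note that numbering and sign conventions for these numbers vary between sources.

```python
from fractions import Fraction
from math import comb

def bernoulli_numbers(n):
    """Return [B_0, ..., B_n] as exact fractions (convention with B_1 = -1/2)."""
    b = [Fraction(1)]  # B_0 = 1
    for m in range(1, n + 1):
        # Each new number is built from all of the earlier ones.
        total = sum(comb(m + 1, k) * b[k] for k in range(m))
        b.append(-total / (m + 1))
    return b

print([str(x) for x in bernoulli_numbers(6)])
# ['1', '-1/2', '1/6', '0', '-1/30', '0', '1/42']
```

Because every value depends on all the values before it, the calculation needs a loop and stored intermediate results, which is part of why it made a good demonstration for a programmable machine.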

Her algorithms and program were sound. However, computers capable of carrying them out would not be created for another hundred years, by which time her work had been forgotten. Much of it had to be re-created; her original writings were republished only in 1953, a decade or so after the first of those computers appeared.

The Algorithm

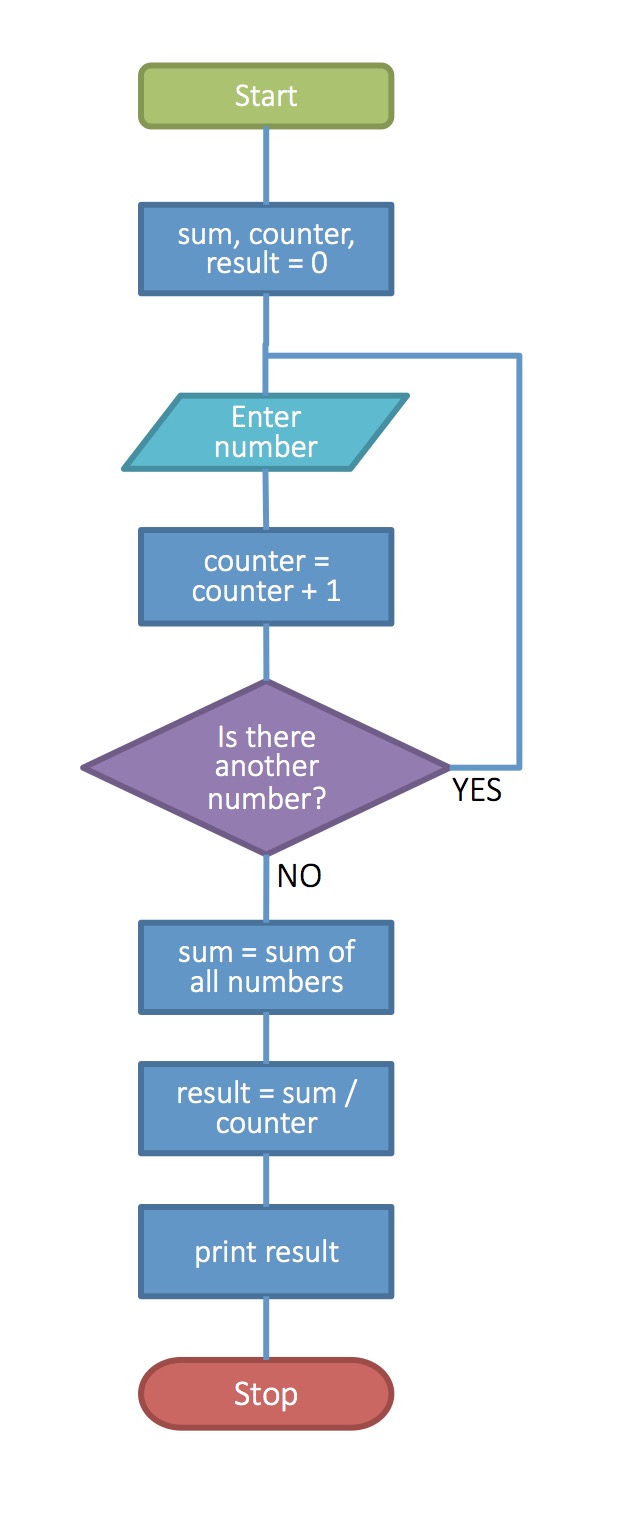

So, what is an algorithm, and why is it important?

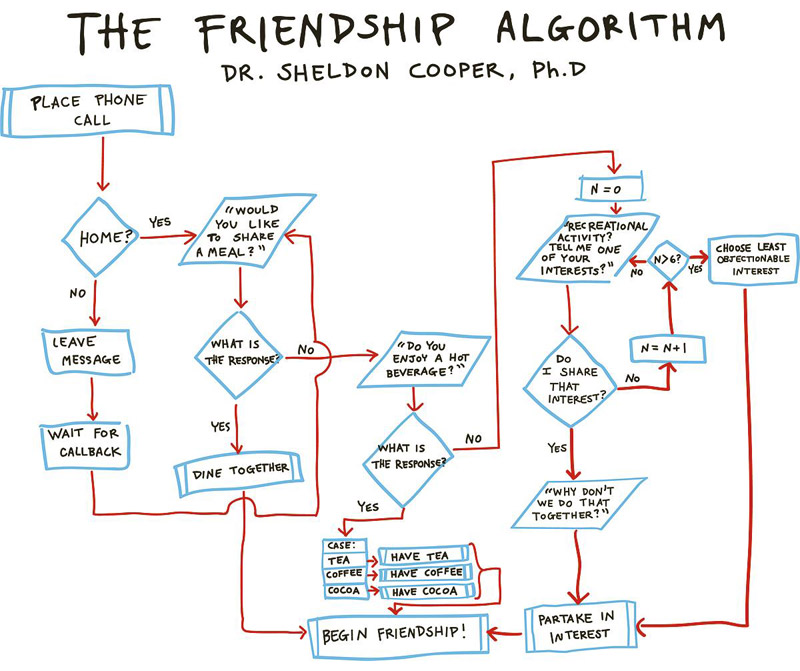

An algorithm is a logical series of steps (instructions) to achieve a goal. Algorithms can be diagrammed in the form of a flowchart, with a different shape for each type of action taken. A flowchart helps to make sure that the algorithm covers all possibilities to achieve the goal.

Algorithms are the basic elements of computer programs; any program will have a number of algorithms—sequences of instructions to achieve various outcomes, which when combined together form a whole program.

Algorithms might typically be used to create functions, which accomplish a specific task in a program. For example, one task might be to find the average of a set of numbers. The algorithm to do this can be seen in the illustration at right. Algorithms can be used to describe any process.
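Written as a function, an averaging algorithm might look like the sketch below. This is one plain way to express the steps (add everything up, count the items, divide) and is not necessarily identical to the flowchart in the illustration; the function name `average` is chosen just for this example.

```python
def average(numbers):
    """Average by steps: add everything up, count the items, then divide."""
    total = 0
    count = 0
    for value in numbers:
        total += value   # running sum
        count += 1       # how many numbers we have seen
    if count == 0:
        # A good algorithm covers every possibility, including "no numbers."
        raise ValueError("cannot average an empty set of numbers")
    return total / count

print(average([4, 8, 15]))  # 9.0
```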

You may have seen algorithm flowcharts before; they are a series of boxes describing a set of actions with various conditional points. For example, there might be a diamond-shaped box with the question, "Is it raining?" and two arrows going out of the box, one labeled "Yes," and one labeled "No." The "Yes" arrow leads to an action box which reads, "Take an umbrella," while the "No" arrow leads to a box reading, "Enjoy the nice day!"

Below is an example of an algorithm written for a comedy TV show, The Big Bang Theory. In the show, Dr. Sheldon Cooper is a brilliant scientist, but is terrible at simple human interactions. He has trouble making friends, so he writes an algorithm for the process. Although it is intended for a person to follow, it is written in the style of a computer program. In this algorithm, you call someone on the phone, and (with a conditional to deal with an answering machine), ask them if they wish to have a meal together, to share a drink, or to go and do some shared interest. Notice the counter "N" on the right side: each time you ask the other person what their interest is, 1 is added to the counter; if 6 interests are expressed but none are shared, a decision is made to choose the least disliked of the six. At the end, some action is taken, and a friendship begins.
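The same process can be sketched as code. This is a loose illustration based only on the description above, not a transcription of the flowchart from the show: the function name, its parameters, and the idea of scoring interests with numbers are all assumptions made for this example.

```python
# Hypothetical sketch: every name and data shape here is an assumption.
MAX_INTERESTS = 6  # the limit on the counter N described in the text

def make_friend(answers_phone, wants_meal, wants_drink, their_interests, my_ratings):
    """Return the chosen action as a string.

    my_ratings maps an interest to a score: above 0 means I share it,
    and a lower score means I dislike it more. Unrated interests score 0.
    """
    if not answers_phone:              # conditional for the answering machine
        return "leave a message"
    if wants_meal:
        return "share a meal"
    if wants_drink:
        return "share a drink"

    n = 0                              # the counter N
    heard = []                         # interests expressed so far
    for interest in their_interests:
        n += 1                         # one more interest has been asked for
        heard.append(interest)
        if my_ratings.get(interest, 0) > 0:
            return f"share the interest: {interest}"
        if n == MAX_INTERESTS:
            break                      # stop asking after six

    if not heard:
        return "no activity found"
    # None were shared: pick the least disliked (the highest score).
    least_disliked = max(heard, key=lambda i: my_ratings.get(i, 0))
    return f"do the least disliked: {least_disliked}"

ratings = {"golf": -5, "fishing": -3, "knitting": -2,
           "karaoke": -9, "hiking": -4, "chess": -6}
print(make_friend(True, False, False, list(ratings), ratings))
# do the least disliked: knitting
```

The counter is what keeps the loop from running forever: without the limit of six, a person with no shared interests would be asked the same question endlessly.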

Who Was First?

There is a certain amount of debate about who created the first true electronic computer. There are four major contenders:

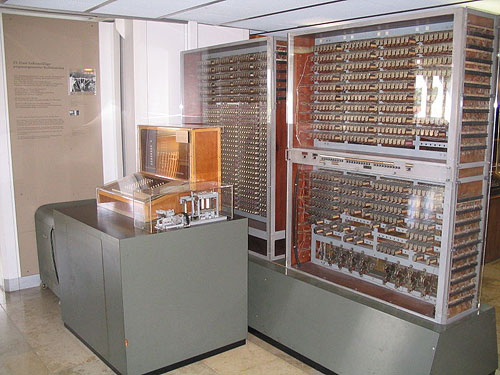

- Konrad Zuse, German inventor of the Z3 computer

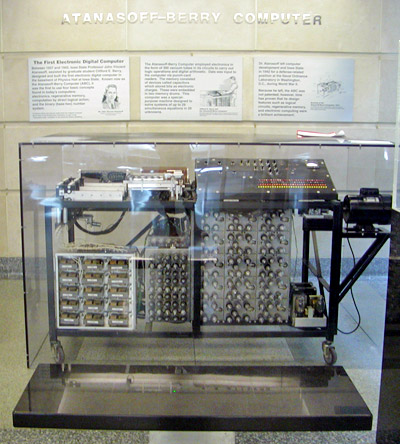

- John Atanasoff, and the Atanasoff–Berry computer (ABC)

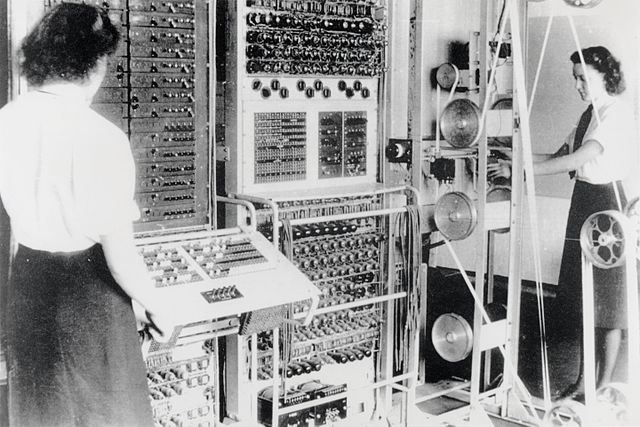

- Tommy Flowers and the top-secret Colossus in England

- Mauchly and Eckert with the American ENIAC

The Z3 Computer

In 1941, Konrad Zuse built the Z3, which is described as the world's first working programmable, fully automatic digital computer. The Z3 was not fully electronic, relying still on various mechanical parts. Even so, it is considered to be the first Turing-complete computer (although in a limited way). The Z3 was destroyed in a bombing raid in 1943.

The Atanasoff–Berry computer (ABC)

John Atanasoff first came up with the idea for his computer in 1937, and built the ABC, or the Atanasoff-Berry Computer, between 1939 and 1942. This computer was not fully programmable; it was not Turing-complete. However, it was the first fully electronic computer, and among the first to use binary digits. It was simply limited in what programs it could carry out.

The Colossus

While Alan Turing and others were using electromechanical code-breaking machines called "Bombes," Tommy Flowers was heading a project to build a fully electronic, binary, Boolean-based programmable computer. Ten such Colossus computers were built, with impressive speed and power for their time. However, these computers were specifically built for code-breaking, and so they were not Turing-complete. In addition, because of their top-secret nature, the computers were destroyed after the war and were not made public until the 1970s.

The work done by Turing's Bombes and Flowers' Colossus computers was vastly influential. They broke the "unbreakable" German codes, and gave Britain and its allies invaluable information which helped them win the war. It could easily be argued that, without the work done by these machines, World War II could have ended very differently.

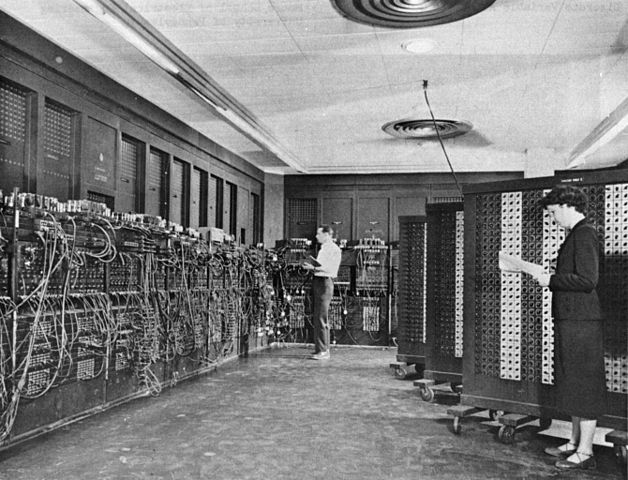

ENIAC

Work began on ENIAC in 1943, and it was completed in 1946. The computer had more than 17,000 vacuum tubes, and could perform 5000 base-10 addition problems per second. It was the size of a small building with 167 square meters of floor space (the same as a fairly large house in Japan, or a square about 13 meters on a side).
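The figures above are easy to check with a little arithmetic. The short sketch below uses only the two numbers given in the text; the variable names are chosen just for this example.

```python
import math

floor_area_m2 = 167          # floor space, from the text
additions_per_second = 5000  # addition speed, from the text

# A square with this area has sides equal to the square root of the area.
side_m = math.sqrt(floor_area_m2)

# Time for a single addition is the inverse of the rate.
seconds_per_addition = 1 / additions_per_second

print(f"{side_m:.1f} m on a side")                                     # 12.9 m on a side
print(f"{seconds_per_addition * 1e6:.0f} microseconds per addition")   # 200 microseconds per addition
```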

The computer was fully electronic, fully programmable and Turing-complete. In this sense, the ENIAC was perhaps the first fully electronic modern general-purpose computer.

In comparison, each of the other three computers mentioned above fell short in some way: the Z3 was not fully electronic, and the ABC and Colossus were limited in the programs they could carry out. In addition, the Z3 and Colossus were not known to the public, and both were destroyed. So, in that sense, ENIAC seems to be the winner.

However, it was later found that ENIAC was not a fully original design: John Mauchly, one of its chief designers, had visited John Atanasoff and observed the ABC computer at work, and, as was later decided in court, had derived many of ENIAC's ideas from Atanasoff's work.

How Computers Changed History

The fact that the use of computers had a great impact on the outcome of WWII is not debatable; the only real question is how much of an effect it had.

The use of computers like Colossus allowed Allied forces to break German codes, contributing heavily to Allied victories in the war. At the very least, the use of these computers allowed Britain to avoid defeat, and shortened the war in Europe by at least several months, saving untold thousands of lives.

However, another aspect should be considered: Hitler and Nazi Germany failed to use computers. Konrad Zuse asked Germany for funding for his projects, and was turned down. What if Germany had fully funded and used computers for code generation, code-breaking, and other scientific research, and the Allies had not?

Clearly, it is impossible to predict what the outcome would have been. It is not hard to imagine, however, that any such outcome would have been significant.